
Erlang Solutions: Erlang Solutions Webinar Round-Up

news.movim.eu / PlanetJabber • 8:12 • 2 minutes

Over the past few months, our team has been exploring what happens when systems come under pressure.

Through a series of webinars, we’ve looked at everything from concurrency in the BEAM (the Erlang virtual machine) to traffic spikes, real-time communication platforms, and resilient system design.

Maybe you’ve been following along, or maybe one or two of these webinars slipped past you. Either way, this is a chance to catch up on the ideas shaping how modern platforms are built and scaled.

Concurrency, Understanding the BEAM Limits

Modern systems can handle huge amounts of concurrent work. But sooner or later, every system reaches a point where performance starts to suffer.

In “Concurrency, Understanding the BEAM Limits”, Lorena Mireles Rivero explores how concurrency works inside the BEAM and where those limits begin to appear. Using examples from web applications and e-commerce platforms, she looks at how schedulers, mailboxes, CPU usage, and latency behave under load.

The session also identifies common signs of system saturation and practical ways to keep applications running smoothly as demand grows.

Watch the webinar to learn more about concurrency in the BEAM and how to build systems that perform under pressure.

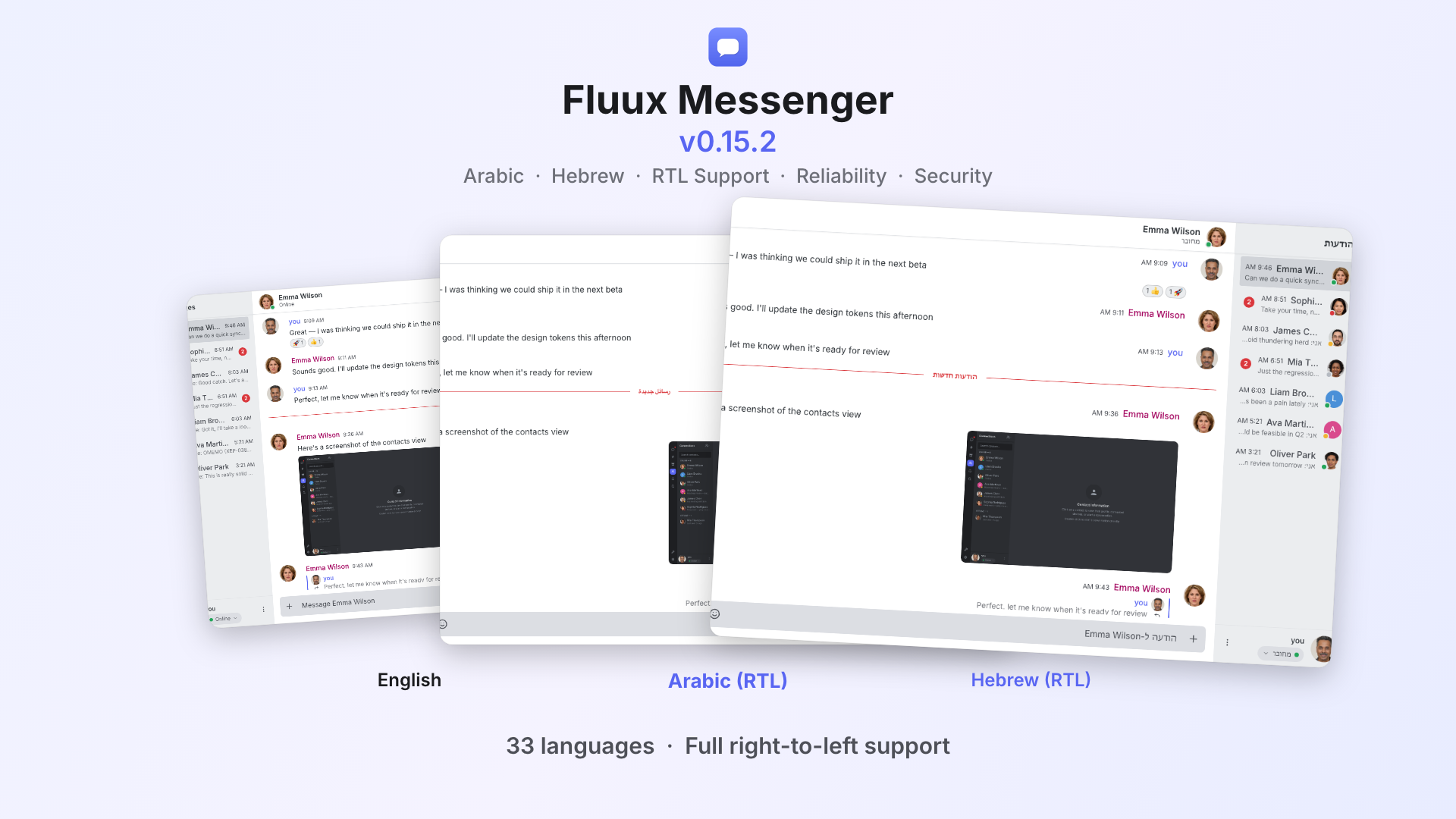

Keeping Real-Time Communication Platforms Online During Peak Demand

Real-time platforms don’t get a second chance. When demand spikes, messages still need to be delivered instantly and reliably.

In this webinar, Bartłomiej Górny explores what happens when systems are pushed to their limits. He looks at common bottlenecks, overloaded services, and how failures can spread across a platform when demand suddenly increases.

The session also covers practical approaches to scaling real-time systems, from service decoupling and back pressure to monitoring and load testing.

Watch the webinar to learn how real-time platforms can stay reliable during periods of peak demand.

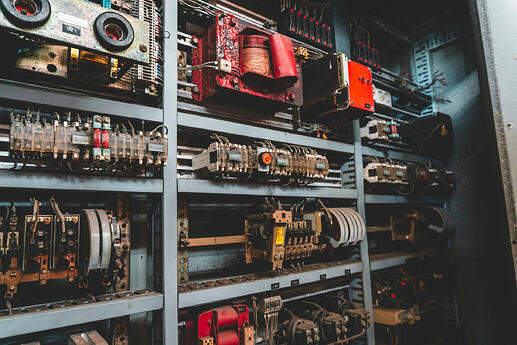

How to Build Systems That Stay Online When Everything Spikes

Traffic doesn’t always increase gradually. Sometimes it arrives all at once.

In this session, Camjar Djoweini explores what happens when systems come under sudden pressure and why failures can quickly spread across services. He looks at where problems typically start and what makes some architectures more resilient than others.

The webinar focuses on designing systems that can absorb spikes, tolerate failures, and continue operating when conditions become unpredictable.

Watch the webinar to learn more about building resilient systems that stay online under pressure.

How to Build Platforms That Don’t Let Audiences Down

For gaming, betting, and entertainment platforms, traffic spikes are part of everyday life. The challenge is making sure users never notice them.

In this webinar, Lee Sigauke explores why systems fail during sudden surges in demand and how teams can build platforms that remain reliable under pressure. Drawing on principles from transactional systems in Erlang and Elixir, she shows how concurrency-first design helps systems cope with unpredictable workloads.

The session covers common failure patterns, resilience at scale, and practical ways to build platforms that continue performing when demand reaches its peak.

Watch the webinar to see how concurrency-first design helps platforms remain reliable when traffic surges.

To conclude

That wraps up our latest webinar round-up. We hope this guide helps you catch up on some of the ideas we’ve been exploring over the past few months.

If something here has sparked your interest, whether it’s concurrency in the BEAM, building resilient architectures, or keeping platforms reliable during periods of peak demand, we’d love to continue the conversation, so get in touch.

Here’s to building systems that stay stable, scalable, and ready for whatever comes next.

The post Erlang Solutions Webinar Round-Up appeared first on Erlang Solutions.