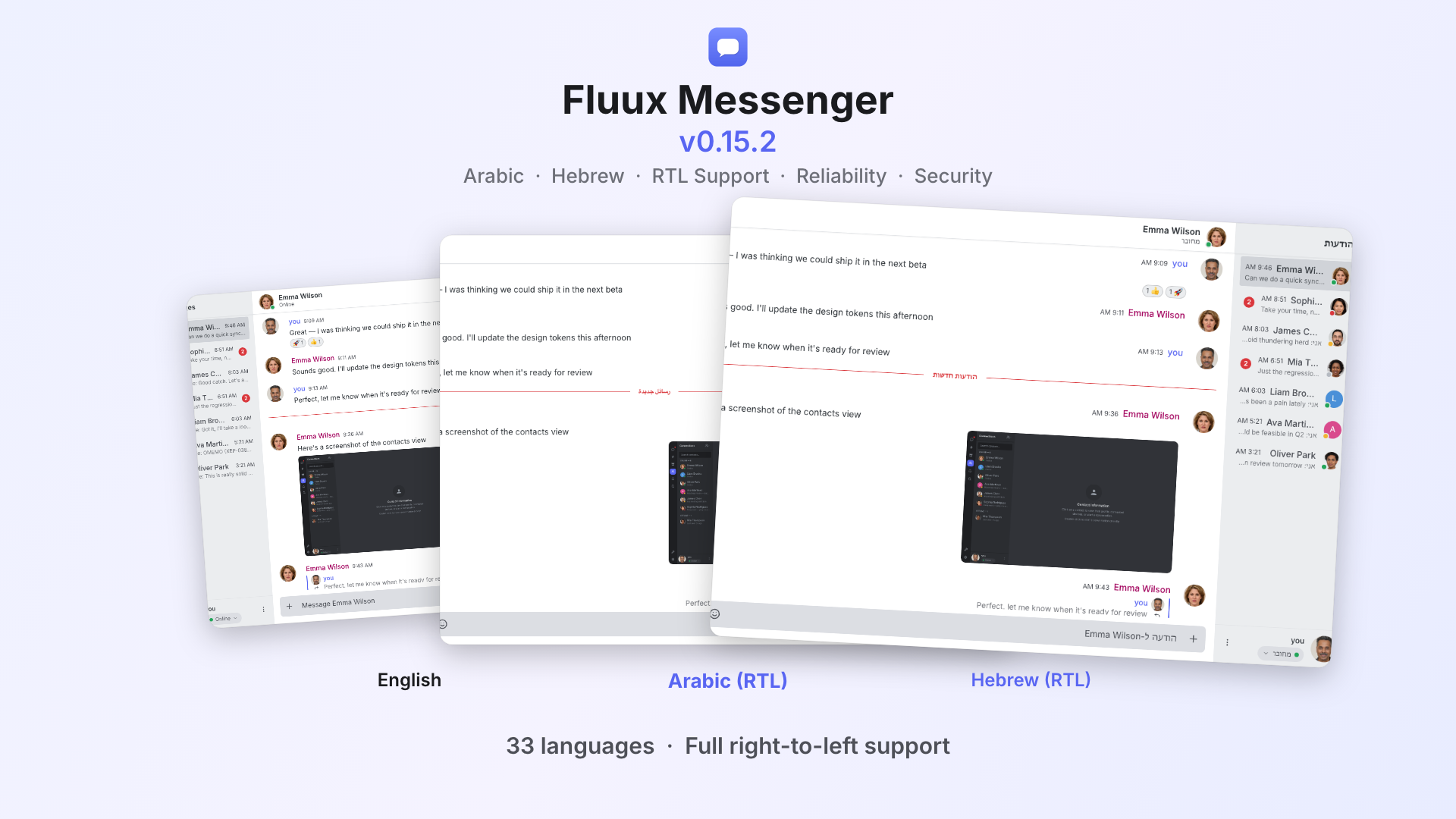

While working on

Borogove

there has been a desire to have full-text search of locally-stored chat message history. For web-based apps the main storage engine in use is

IndexedDB

, which is a rather low-level system and certainly doesn’t have easy facilities for this kind of operation. So what’s the simplest, performant way to implement a full-text search on IndexedDB?

In case you are wondering “

what is full-text search

?” for our purposes we are going to be looking at a search where we want to find any message that contains all the words the user has typed, in any order

Table Scan

While this won’t be our final solution, it is almost always the right place to start. If your data is small (say, under 10k documents) then a table scan is pretty quick, and definitely the simplest option

// Helper so we can use promises with IndexedDB

function promisifyRequest(request) {

return new Promise((resolve, reject) => {

request.oncomplete = request.onsuccess = () => resolve(request.result);

request.onabort = request.onerror = () => reject(request.error);

});

}

const stopwords = ["and", "if", "but"];

function tokenize(s) {

return s.toLowerCase().split(/\s*\b/)

.filter(w => w.length > 1 && w.match(/\w/) && !stopwords.includes(w));

}

function stemmer(s) {

// Optional, https://www.npmjs.com/package/porter2 or similar

}

async function search(q) {

const qTerms = new Set(tokenize(q).map(stemmer));

const tx = db.transaction(["messages"], "readonly");

const store = tx.objectStore("messages");

const cursor = store.openCursor();

const result = [];

while (true) {

const cresult = await promisifyRequest(cursor);

if (!cresult?.value) break;

if (new Set(tokenize(cresult.value.text).map(stemmer)).isSupersetOf(qTerms) {

result.push(cresult.value);

}

cresult.continue();

}

return result;

}

Even though we aren’t doing anything fancy with the database yet, there are still a lot of important building blocks here

First we tokenize the query. This means to chunk it up into “words”. Words don’t have to be exactly real words, they can be consistent parts of words or anything else, so long as you tokenize your query and document text in the same way. Here we use a simple strategy where we trust the

\b

word-break regex pattern, strip any extra whitespace, ignore any empty words or words made of only non-word characters (again, as determined by

\w

, nothing fancy), and also ignore any stopwords. A “stopword” is a very common word that is not useful to include in the search query such as “and”. Mostly, stopwords are useful to avoid blowing up the index size later, but we include it here for now for consistency

Next we

stem

the tokens. This is optional and depends on the kind of search you’re trying to build. The purpose of a stemmer is to make searches for eg “flying” also match messages containing the word “fly”

Then we iterate over all the items in the object store. If you wanted the results in a particular order, you could instead iterate over an index on the store in a particular order. We tokenize and stem the text from each item and check if it contains all the “words” from qTerms, if so it is part of the results.

Use an Index

Now what if you have many messages (one million or more, perhaps) and the simple table scan is just too slow. How can we speed this up? With IndexedDB we only get one kind of index: an ordered index, usually build on a

B-Tree

. This is not exactly what many full-text indexes are built on, but still we can get a very big performance boost without too much more complexity.

First in the

onupgradeneeded

migrator we need to create the index:

tx.objectStore("messages").createIndex("terms", "terms", { multiEntry: true });

This is a “multiEntry” index which means that the index will get one entry for each item in the array stored at this key, rather than indexing on the array as a whole piece. So when we store a new message we need to include the terms as an array:

tx.objectStore("messages").put({ text, terms: [...new Set(tokenize(text).map(stemmer))] });

Now, this does not let us search by our full query, but rather only by one word. How does this help us? Well, we can iterate over only documents which match at least one of the terms in our query. Which one should we pick? Counting items is pretty fast, so let’s pick whichever one has the smallest number of results:

async function search(q) {

const qTerms = new Set(tokenize(q).map(stemmer));

const tx = db.transaction(["messages"], "readonly");

const store = tx.objectStore("messages");

const index = store.index("terms");

// Figure out which search term matches the fewest messages

let probeTerm = null;

let probeScore = null;

for (const term of qTerms) {

const score = await promisifyRequest(index.count(IDBKeyRange.only(term)));

if (!probeTerm || score < probeScore) {

probeTerm = term;

probeScore = score;

}

}

// Using the smallest list of messages that match one term

// Find the ones that match every term

const result = [];

const cursor = index.openCursor(IDBKeyRange.only(probeTerm));

while (true) {

const cresult = await promisifyRequest(cursor);

if (!cresult?.value) break;

if (new Set(cresult.value.terms).isSupersetOf(qTerms)) {

result.push(cresult.value);

}

cresult.continue();

}

// Sort results

return result.sort((a, b) => a.timestamp < b.timestamp ? -1 : (a.timestamp > b.timestamp ? 1 : 0));

}

The operation to count the index for each term is pretty fast, but if you found this prohibitive, you could also store these counts in their own keys and update them as you insert new messages. Once we know which is the smallest, we then do the same scan as before, but only over that much smaller subset. The tokenized and stemmed terms are stored so we can compare against those directly here rather than doing it again.

Now if we want it sorted we have to do it ourselves, just like a DB engine would with this kind of query where the order we want does not match the index order.

On a test set of one million messages, this simple index was enough to take the performance from unusable grinding to almost instant responses, since the number of messages in the smallest term is usually still well under 10k.